Edge AI • Predictive maintenance • No cloud dependency

Critical equipment doesn’t fail on schedule. It fails when conditions drift, when signals look “almost normal” until they suddenly aren’t. Edge AI monitoring brings real-time detection and diagnostics next to the machine—so you can react early even when connectivity is limited, restricted, or unreliable.

This guide explains what “AI at the edge” really means in industrial monitoring, why cloud dependency can be risky for critical assets, and how to deploy a system that is offline-capable, secure, and actionable—not just a dashboard.

What Edge AI monitoring is (and what it isn’t)

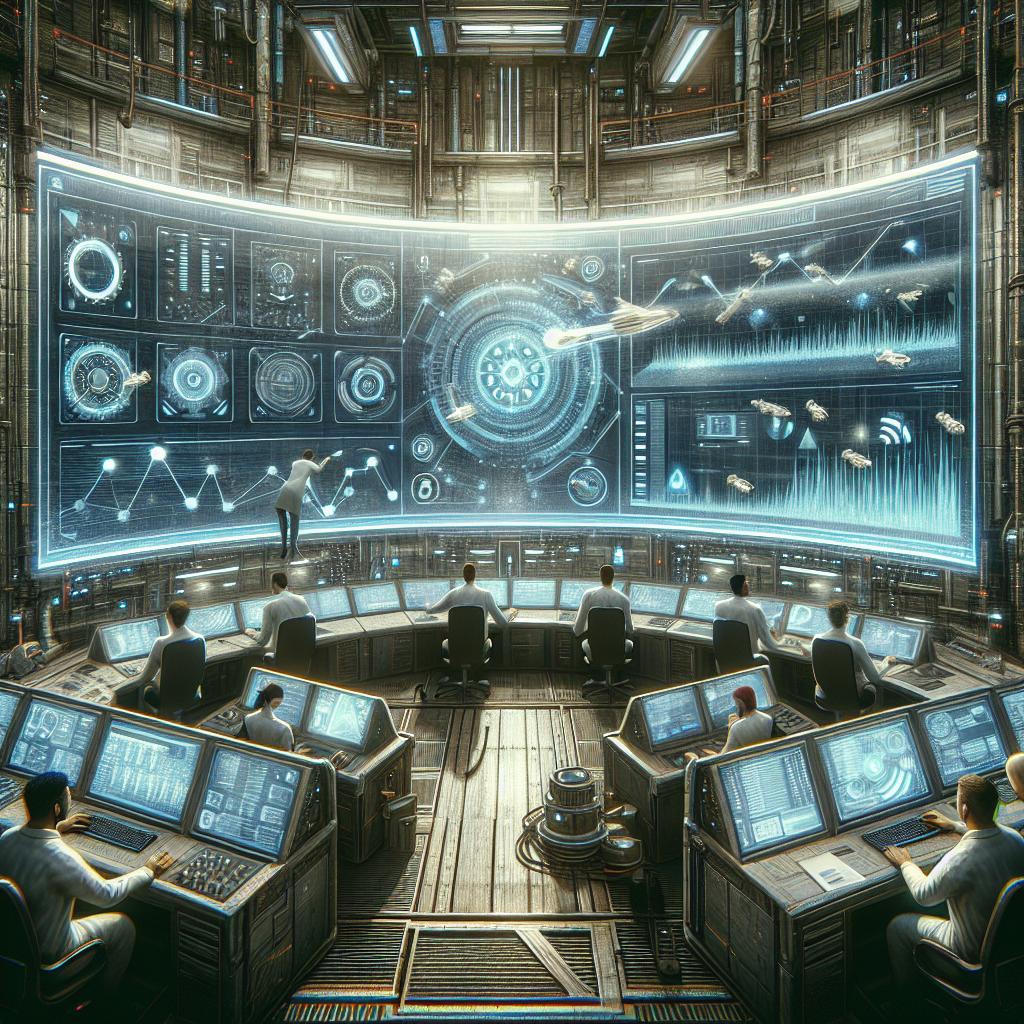

Edge AI monitoring means running anomaly detection, diagnostics, and health scoring close to the equipment—on an on‑premise gateway, industrial PC, or embedded device—so decisions can happen where the signals are produced.

It’s not “a cloud dashboard with IoT charts.” It’s a monitoring layer that can keep working under real constraints: limited bandwidth, intermittent connectivity, data residency requirements, or even air‑gapped environments.

In practical terms: Edge AI turns raw signals into actions your team can execute.

- Detect deviations early (before a breakdown forces downtime).

- Prioritize risk (so maintenance focuses on what matters most).

- Trigger next steps (alerts, tickets, work orders, or operational recommendations).

What “no cloud dependency” really means

“No cloud dependency” doesn’t have to mean “no cloud at all.” It means the system is designed so that core monitoring continues without the cloud.

Edge-first, cloud-optional architecture

The edge handles what must be immediate and always-on. The cloud (if used) supports what can be delayed or aggregated.

- Local inference: anomaly detection and health scoring run on-site.

- Local storage: critical events are stored locally, so events recorded during an outage are kept and can be synced later.

- Optional sync: periodic upload of summaries (not raw sensitive data) when allowed.

- Controlled updates: model updates can be scheduled, approved, and auditable—especially in restricted networks.

This approach keeps you resilient. If the internet goes down, monitoring doesn’t. If the cloud is restricted by policy, compliance, or cost, you still get real-time protection.
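As a rough illustration of the local storage and optional sync points above, here is a minimal store-and-forward sketch. It assumes events are small JSON-serializable dictionaries and that SQLite is available on the edge device. The `EventBuffer` class and the `upload` callback are hypothetical names used only for this example, not part of any specific product.

```python
import json
import sqlite3
import time


class EventBuffer:
    """Local store-and-forward queue for detected events (hypothetical helper)."""

    def __init__(self, path=":memory:"):
        # Use a file path on the gateway's disk in a real deployment.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS events ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "ts REAL NOT NULL, "
            "payload TEXT NOT NULL, "
            "synced INTEGER NOT NULL DEFAULT 0)"
        )
        self.db.commit()

    def record(self, payload):
        """Persist an event locally first; this step never touches the network."""
        self.db.execute(
            "INSERT INTO events (ts, payload) VALUES (?, ?)",
            (time.time(), json.dumps(payload)),
        )
        self.db.commit()

    def pending(self):
        """Number of events not yet uploaded."""
        row = self.db.execute(
            "SELECT COUNT(*) FROM events WHERE synced = 0"
        ).fetchone()
        return row[0]

    def sync(self, upload):
        """Upload unsynced events in order; stop at the first network failure."""
        rows = self.db.execute(
            "SELECT id, payload FROM events WHERE synced = 0 ORDER BY id"
        ).fetchall()
        sent = 0
        for event_id, payload in rows:
            try:
                upload(json.loads(payload))
            except OSError:
                break  # link is down: keep the rest for the next attempt
            self.db.execute(
                "UPDATE events SET synced = 1 WHERE id = ?", (event_id,)
            )
            self.db.commit()
            sent += 1
        return sent
```

Detection calls `record()` regardless of connectivity, and a scheduled job calls `sync()` whenever a link is allowed. A failed upload leaves the events marked as pending, so they are retried on the next attempt.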

Why companies move monitoring from cloud-only to edge-first

Cloud platforms are powerful for central analytics, fleet learning, and long-term aggregation. But for critical equipment, cloud-only monitoring often creates operational friction: latency, connectivity risk, cost uncertainty, and security constraints.

Typical edge-first drivers

- Reliability: continuous monitoring even with unstable networks.

- Speed: decisions closer to the machine—useful when minutes matter.

- Data control: sensitive telemetry stays on-site by default.

- Lower bandwidth: transmit insights instead of raw high-frequency streams.

- Operational autonomy: sites remain protected without depending on external services.

If your monitoring must be always-on and actionable, edge-first is usually the cleanest design. If you also want fleet-level learning, you can add cloud as a second layer—without turning it into a single point of failure.

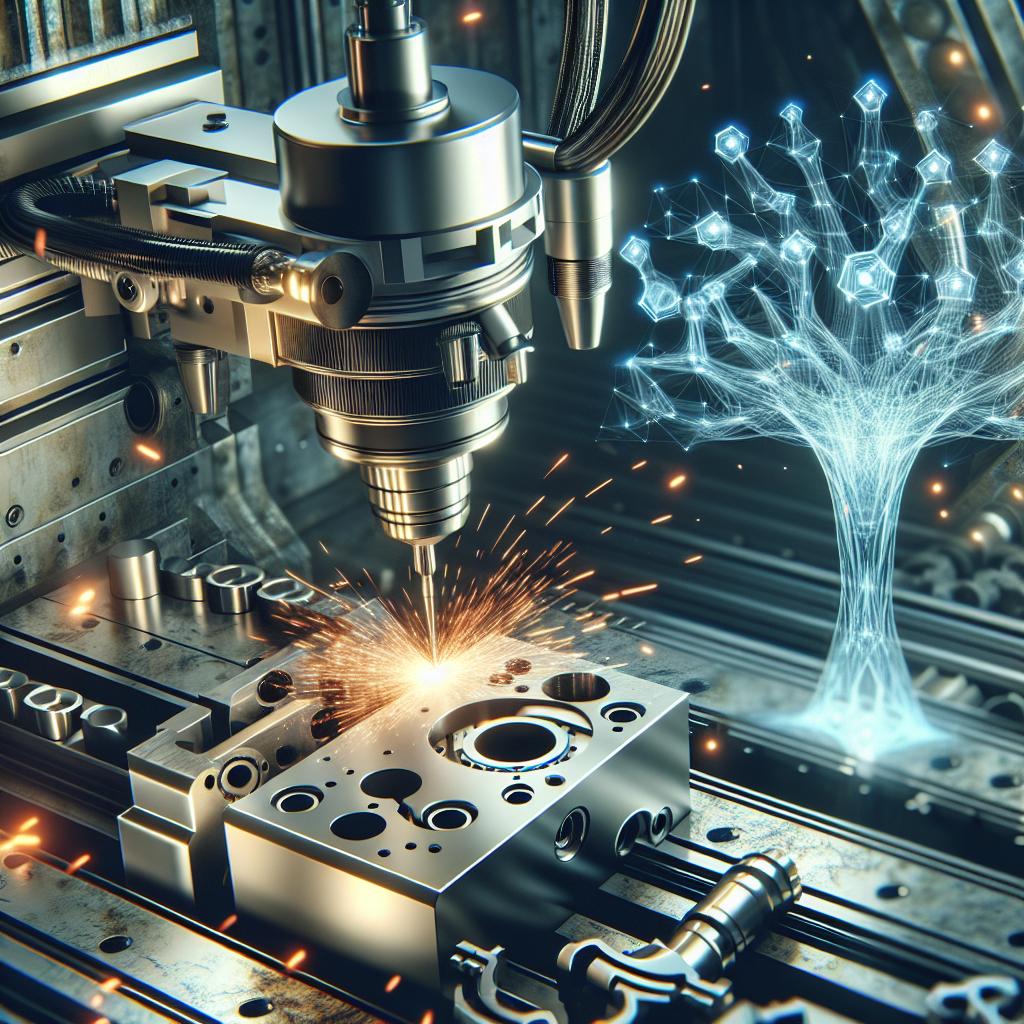

Signals & data: what you can monitor

Edge AI monitoring works best when you combine signals that reflect real equipment behavior. You don’t need “perfect data”—but you do need signals that move when the machine moves.

Common data sources in critical equipment monitoring

- Vibration: bearings, imbalance, misalignment, looseness, resonance.

- Temperature: overheating, friction, insulation issues, abnormal thermal patterns.

- Electrical current & power: motor load changes, mechanical drag, efficiency drift.

- Pressure & flow: pumps, compressors, valves, cavitation, blockages.

- Acoustic / ultrasound: leaks, arcing, early mechanical faults.

- SCADA / PLC tags: setpoints, cycles, alarms, states, production context.

Tip: the fastest wins often come from combining a few strong signals with the right operational context (load, regime, recipe, shift, ambient conditions). That reduces false alarms and increases trust.

How an Edge AI monitoring system works

A reliable deployment is not “just a model.” It’s a full loop: capture → detect → explain → act → learn.

1) Capture & normalize signals

Pull data from sensors, PLC/SCADA tags, historians, or edge collectors. Normalize timestamps, clean obvious noise, and align signals to the equipment’s operating modes (idle vs load, startup vs steady state).
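One possible way to align samples to operating modes is sketched below. It assumes a single motor-current signal is enough to tell idle from running, and the `idle_below` and `startup_seconds` values are illustrative placeholders that would need tuning per asset.

```python
def tag_operating_mode(samples, idle_below=1.0, startup_seconds=30.0):
    """Label each (timestamp, motor_current) sample as idle, startup, or steady.

    Thresholds are illustrative assumptions, not recommended values.
    """
    ordered = sorted(samples)  # normalize ordering by timestamp
    labeled = []
    run_started_at = None  # timestamp when the machine last left idle
    for ts, current in ordered:
        if current < idle_below:
            run_started_at = None
            mode = "idle"
        else:
            if run_started_at is None:
                run_started_at = ts
            elapsed = ts - run_started_at
            mode = "startup" if elapsed < startup_seconds else "steady"
        labeled.append((ts, current, mode))
    return labeled
```

Downstream detection can then compare each sample only against other samples with the same mode label.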

2) Detect anomalies (in real time)

Use anomaly detection to flag behavior that deviates from the equipment’s baseline. For many assets, anomaly detection is the best starting point because it learns normal behavior and does not need labeled failure examples, so it works even when failures are rare.
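A minimal sketch of baseline-based detection follows, using a rolling z-score as a simple stand-in for whatever model a real deployment would use. The `RollingBaseline` class, window size, and threshold are assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Rolling z-score detector (illustrative, not a production model)."""

    def __init__(self, window=50, threshold=3.0, min_samples=10):
        self.values = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def score(self, x):
        """Return the z-score of x against the baseline (0.0 while warming up)."""
        if len(self.values) < self.min_samples:
            return 0.0
        mu = mean(self.values)
        sigma = stdev(self.values)
        if sigma == 0:
            return 0.0 if x == mu else float("inf")
        return abs(x - mu) / sigma

    def update(self, x):
        """Score x, then add it to the baseline only if it looks normal."""
        z = self.score(x)
        is_anomaly = z > self.threshold
        if not is_anomaly:
            self.values.append(x)
        return z, is_anomaly
```

Keeping anomalous samples out of the baseline stops a developing fault from being learned as normal. The trade-off is that a legitimate process change then needs a deliberate baseline reset.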

3) Convert detection into actionable insights

A good system doesn’t just say “something is wrong.” It answers: what changed, how severe it is, which component is likely involved, and what to do next.
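To make those four answers concrete, the sketch below turns per-signal deviation scores into a small structured insight. The `SIGNAL_HINTS` mapping, the severity thresholds, and the field names are hypothetical; in practice they would come from asset-specific knowledge agreed with the maintenance team.

```python
from dataclasses import dataclass

# Hypothetical mapping: signal -> (likely component, suggested first check).
SIGNAL_HINTS = {
    "vibration": ("bearing or alignment", "inspect bearing and coupling alignment"),
    "temperature": ("lubrication or cooling", "check lubrication and cooling path"),
    "current": ("motor load", "check for mechanical drag or overload"),
}


@dataclass
class Insight:
    asset_id: str
    what_changed: str
    severity: str
    likely_component: str
    next_step: str


def build_insight(asset_id, z_scores, warn_at=3.0, critical_at=6.0):
    """Turn per-signal z-scores into one insight, or None if nothing is abnormal."""
    if not z_scores:
        return None
    # Report the signal that deviates most from its baseline.
    signal, z = max(z_scores.items(), key=lambda item: item[1])
    if z < warn_at:
        return None
    severity = "critical" if z >= critical_at else "warning"
    component, step = SIGNAL_HINTS.get(signal, ("unknown", "manual inspection"))
    return Insight(
        asset_id=asset_id,
        what_changed=f"{signal} deviates {z:.1f} sigma from baseline",
        severity=severity,
        likely_component=component,
        next_step=step,
    )
```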

4) Trigger the workflow (alerts, tickets, work orders)

Monitoring becomes valuable when it integrates into your operations: alerts to the right channel, tickets with context, work orders with asset IDs, and clear thresholds for escalation.
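As a sketch of what a ticket with context might look like, the function below builds a JSON-ready payload for a ticketing or CMMS connector. The `ROUTES` table, channel names, and field names are illustrative assumptions; a real integration would follow the schema of the target system.

```python
import json
from datetime import datetime, timezone

# Hypothetical routing table: severity -> (channel, open a work order?).
ROUTES = {
    "info": ("dashboard", False),
    "warning": ("maintenance-queue", True),
    "critical": ("on-call", True),
}


def build_work_order(asset_id, severity, summary, detected_at=None):
    """Return a JSON-ready dict for a ticketing connector (illustrative fields)."""
    if severity not in ROUTES:
        raise ValueError(f"unknown severity: {severity}")
    channel, open_order = ROUTES[severity]
    detected_at = detected_at or datetime.now(timezone.utc)
    return {
        "asset_id": asset_id,
        "severity": severity,
        "summary": summary,
        "detected_at": detected_at.isoformat(),
        "channel": channel,
        "create_work_order": open_order,
    }


def to_json(order):
    """Serialize the payload for transport or local buffering."""
    return json.dumps(order, sort_keys=True)
```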

5) Improve continuously (without breaking production)

You keep the system trustworthy by managing drift, updating baselines, reviewing false positives/negatives, and improving rules and models over time—on a controlled release rhythm.

Implementation roadmap (step-by-step)

The quickest way to get value is to start narrow: one site, one critical asset group, one workflow. Prove the KPI impact, then scale by reuse.

Step 1 — Define the critical assets and failure modes

Start with the equipment that creates the biggest operational risk: downtime, safety, production loss, or expensive repairs.

Step 2 — Audit signals and access

- Which signals exist today (SCADA tags, vibration, temperature, current)?

- Where does the data live (historian, PLC, gateway, files)?

- What constraints apply (air-gapped, limited bandwidth, data residency)?

Step 3 — Design the edge architecture

Decide where inference runs (gateway/industrial PC), how data is buffered locally, and how alerts flow to teams. The key is predictable operations: clear permissions, logs, and safe fallbacks.

Step 4 — Build a pilot with measurable outcomes

The pilot should produce something your team can actually use: reliable alerting logic, asset-level health scoring, and an integration path into maintenance execution.

Step 5 — Integrate into the maintenance process

If insights stay outside the workflow, adoption drops. Integrate alerts into the tools your teams already use. Align thresholds with operational reality (night shift vs day shift, critical lines vs non-critical).

Step 6 — Rollout and standardize

Once the pilot is trusted, scale with a template: connectors, model packaging, monitoring dashboards, runbooks, and a repeatable onboarding checklist for new assets.

KPIs to prove ROI (without guessing)

The best monitoring projects don’t sell “AI”. They sell measurable operational improvement. Choose KPIs that your organization already respects.

Common KPI set for critical equipment monitoring

- Unplanned downtime hours (and cost per hour if you track it).

- OEE (overall equipment effectiveness) impact, if you operate with OEE discipline.

- MTBF / MTTR improvements (mean time between failures and mean time to repair: reliability & repair speed).

- Maintenance cost per asset (especially emergency interventions).

- False alarm rate + time-to-trust (adoption KPI).

A simple rule: if you can’t measure baseline performance, you can’t prove improvement. Start by capturing “before” for 2–6 weeks, then measure “after” once alerts and workflows are live.
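The before/after comparison can be computed from a simple log of repair durations. The sketch below uses common definitions (MTBF as uptime divided by number of failures, MTTR as total repair time divided by number of failures) and assumes all unplanned downtime in the period is repair time. Definitions vary between organizations, so treat the function and its field names as an illustration.

```python
def reliability_kpis(period_hours, repair_hours):
    """Compute downtime, MTBF, MTTR, and availability for one period.

    period_hours: length of the observation window in hours.
    repair_hours: one repair duration (hours) per unplanned failure.
    """
    failures = len(repair_hours)
    downtime = float(sum(repair_hours))
    if failures == 0:
        return {
            "downtime_hours": 0.0,
            "mtbf_hours": None,  # undefined without at least one failure
            "mttr_hours": None,
            "availability": 1.0,
        }
    uptime = period_hours - downtime
    return {
        "downtime_hours": downtime,
        "mtbf_hours": uptime / failures,
        "mttr_hours": downtime / failures,
        "availability": uptime / period_hours,
    }


# Example with made-up numbers: a 30-day baseline vs. a 30-day "after" window.
before = reliability_kpis(720, [4, 6, 2])
after = reliability_kpis(720, [3])
```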

Common mistakes (and how to avoid them)

Mistake 1 — Monitoring without a response plan

If the system detects issues but the team doesn’t know what to do next, alerts become noise. Fix: define response playbooks (triage → verify → action → escalation).

Mistake 2 — No operating-mode context

Many false positives come from comparing apples to oranges (startup vs steady state, low load vs high load). Fix: include operating regimes and context variables in detection logic.
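One simple way to include operating regimes is to keep a separate baseline per mode, as sketched below. It assumes each sample already carries a mode label, and the function names are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev


def fit_regime_baselines(history):
    """history: iterable of (mode, value). Returns {mode: (mean, stdev)}."""
    by_mode = defaultdict(list)
    for mode, value in history:
        by_mode[mode].append(value)
    # A standard deviation needs at least two samples per mode.
    return {
        mode: (mean(values), stdev(values))
        for mode, values in by_mode.items()
        if len(values) >= 2
    }


def regime_z_score(baselines, mode, value):
    """z-score of value against its own mode's baseline; None if mode is unseen."""
    if mode not in baselines:
        return None
    mu, sigma = baselines[mode]
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma
```

With per-mode baselines, a reading that is normal at high load is not flagged just because it would be extreme at low load.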

Mistake 3 — Integration as an afterthought

Insights stuck in a separate dashboard rarely change outcomes. Fix: integrate alerts with SCADA views, ticketing, and maintenance execution tools.

Mistake 4 — Overpromising “full prediction” too early

For many assets, the first real win is reliable anomaly detection and triage, not perfect failure prediction. Fix: start with detection + actionability, then expand into prognosis (remaining useful life, RUL) when data supports it.

Costs & pricing models (what drives budget)

Budgets vary by industry and constraints, but the drivers are consistent. The goal is to invest where it changes outcomes—not where it adds complexity.

What usually drives cost

- Signal availability: adding sensors vs using what already exists.

- Edge infrastructure: gateways/industrial PCs + local storage.

- Integration work: SCADA/historian/CMMS/ERP connections.

- Modeling approach: anomaly detection vs asset-specific diagnostics vs RUL.

- Governance: access control, audit logs, update approvals, documentation.

Practical way to start: run a focused pilot on 1–2 asset types. Email info@bastelia.com with your equipment list, available signals, and constraints, and we’ll outline the most realistic implementation path.

Alternatives: edge-only vs cloud-only vs hybrid

There isn’t one “correct” architecture for every company. What matters is matching the architecture to your constraints.

Edge-only (fully on-premise)

Best when connectivity is restricted/air-gapped and data must remain on-site. You still get real-time monitoring and local analytics.

Cloud-only

Useful for centralized analytics when connectivity is reliable and latency is not critical. Often simplest for broad fleet dashboards—but can be risky for always-on critical monitoring.

Hybrid (edge-first, cloud-optional)

Often the most practical: edge handles immediate detection; cloud supports fleet learning, long-term trends, and multi-site reporting—without turning the cloud into a single point of failure.

FAQs

What is Edge AI monitoring for critical equipment?

It’s the use of AI models deployed close to the asset (on-premise gateways or industrial PCs) to detect anomalies, assess equipment health, and trigger actions in real time—without needing continuous cloud connectivity.

Does it work if the internet goes down?

Yes—if designed correctly. In an edge-first system, the monitoring and alerting loop runs locally. Connectivity is optional for syncing summaries or enabling centralized reporting, not for core detection.

What data do we need to start?

Many projects start with existing SCADA/PLC tags plus 1–2 strong physical signals (vibration, temperature, current). If historical failure data is limited, anomaly detection can still be effective because it learns “normal” behavior first.

How do you reduce false alarms?

By combining signals with operating context (load, regime, production state), tuning thresholds with your team, and using a clear escalation model (informational alerts vs maintenance-required vs critical stop). Trust comes from stable rules, good context, and consistent triage.
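The escalation model can be sketched as a small state machine that requires a deviation to persist before it asks for maintenance. The `AlertEscalator` class, its thresholds, and the level names are illustrative assumptions to be tuned with the team.

```python
class AlertEscalator:
    """Map a stream of z-scores to escalation levels (illustrative thresholds)."""

    def __init__(self, warn_z=3.0, critical_z=8.0, confirm_after=3):
        self.warn_z = warn_z
        self.critical_z = critical_z
        self.confirm_after = confirm_after
        self.streak = 0  # consecutive samples at or above warn_z

    def update(self, z):
        """Return None, 'informational', 'maintenance-required', or 'critical-stop'."""
        if z < self.warn_z:
            self.streak = 0
            return None
        self.streak += 1
        if z >= self.critical_z:
            return "critical-stop"  # severe enough to skip the confirmation wait
        if self.streak >= self.confirm_after:
            return "maintenance-required"
        return "informational"
```

Requiring several consecutive abnormal samples filters out one-off spikes, at the cost of a short delay before a maintenance alert is raised.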

Can it integrate with SCADA, MES, CMMS, or ERP?

Yes. The most valuable deployments connect insights to the workflow: SCADA views for operators, tickets/work orders for maintenance, and reporting for management. Integration is usually where ROI becomes visible.

Can we keep all data on-premise for security or compliance?

Yes. You can run edge-only deployments where data stays on-site by default. If you later add cloud, you can sync only what’s allowed (aggregates, features, event summaries) with controlled access and audit logs.

How long does a pilot usually take?

It depends on signal availability and integration complexity, but a pilot is typically structured around: defining the asset scope, validating signals, deploying edge inference, and proving KPI impact with real alerts.

How do you update models in air-gapped or restricted sites?

With controlled, auditable updates: packaging models as versioned releases, scheduling maintenance windows, and validating performance before promotion. Updates can be delivered offline if needed.
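As one example of validating an offline-delivered release, the sketch below checks a model file against a SHA-256 digest recorded in a manifest before the file is promoted. The manifest layout (`version`, `sha256`) and the function names are hypothetical. A checksum only detects corruption or accidental mismatch; a signed manifest would be needed to protect against tampering.

```python
import hashlib
import hmac


def sha256_of(path, chunk_size=65536):
    """Hash a file in chunks so large model files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_release(model_path, manifest):
    """Return True if the file matches the digest in the (hypothetical) manifest."""
    expected = manifest["sha256"].lower()
    actual = sha256_of(model_path)
    return hmac.compare_digest(actual, expected)
```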

This content is general information and does not constitute technical or legal advice. For a recommendation tailored to your site constraints, email info@bastelia.com.

Want a realistic plan (not a generic pitch)?

If you share your equipment types, available signals, connectivity constraints, and target KPIs, we can propose a practical edge-first monitoring approach with clear next steps.